Tag: waves


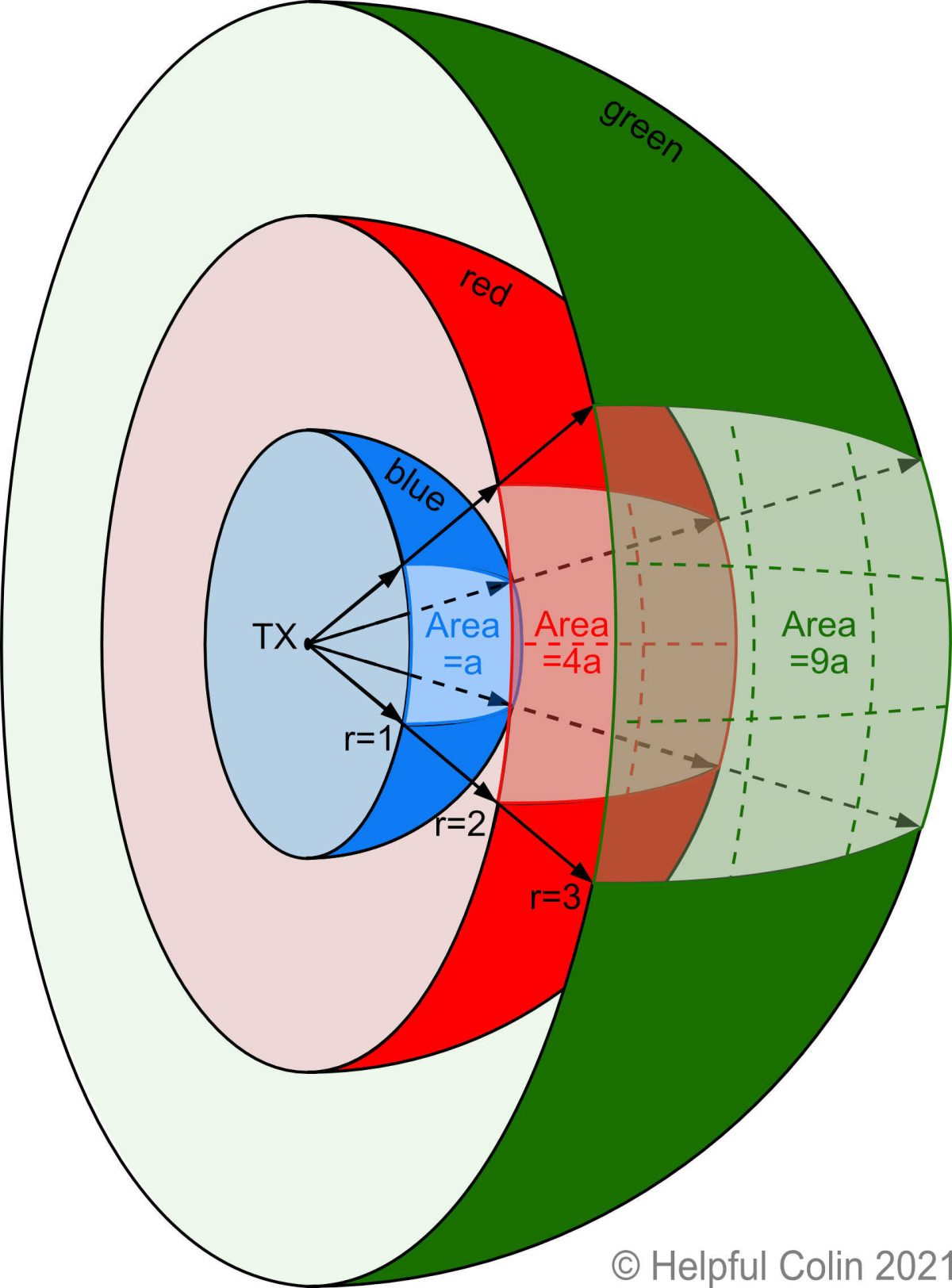

Radio Wave Power And How It Gets Reduced By Distance

Some people are very concerned that the radio wave power coming from transmitters’ antennas will harm people. In particular, people worry that mobile phone masts near their homes will cause long-term health problems. I just want to make it clear how much a transmitter’s power diminishes as it spreads out…
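To give a rough feel for that spreading, here is a minimal sketch. It assumes an idealised antenna radiating equally in all directions through free space, so the power is spread over a sphere of area 4πr² and the power density falls with the square of the distance. Real masts use directional antennas and sit among buildings and terrain, so this is only an illustration. The function name `power_density` and the 20 W figure are my own choices for the example, not values from any particular mast.

```python
import math

def power_density(transmit_power_w, distance_m):
    """Power density in W/m^2 at distance_m from an idealised isotropic source.

    Assumption: the power spreads evenly over a sphere of area 4*pi*r^2
    (free space, no antenna gain, no reflections or obstructions).
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    return transmit_power_w / (4 * math.pi * distance_m ** 2)

# Hypothetical 20 W transmitter, chosen only for illustration.
for d in (1, 10, 100):
    print(f"{d:>4} m: {power_density(20, d):.6f} W/m^2")
```

Under these assumptions, doubling the distance cuts the power density to a quarter, and going ten times further away cuts it to a hundredth.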